How Ensemble Learning Improves Lead Scoring Accuracy

Use bagging, boosting, and stacking to reduce errors, raise AUC-ROC, and improve lead scoring precision and recall.

Ensemble learning transforms lead scoring by combining multiple machine learning models to deliver more accurate predictions. Comparisons of predictive lead scoring with traditional methods often show why manual systems miss the mark: they waste time on low-potential leads or overlook high-value prospects. Ensemble techniques like bagging, boosting, and stacking address these issues by reducing errors and processing complex datasets effectively.

Key takeaways:

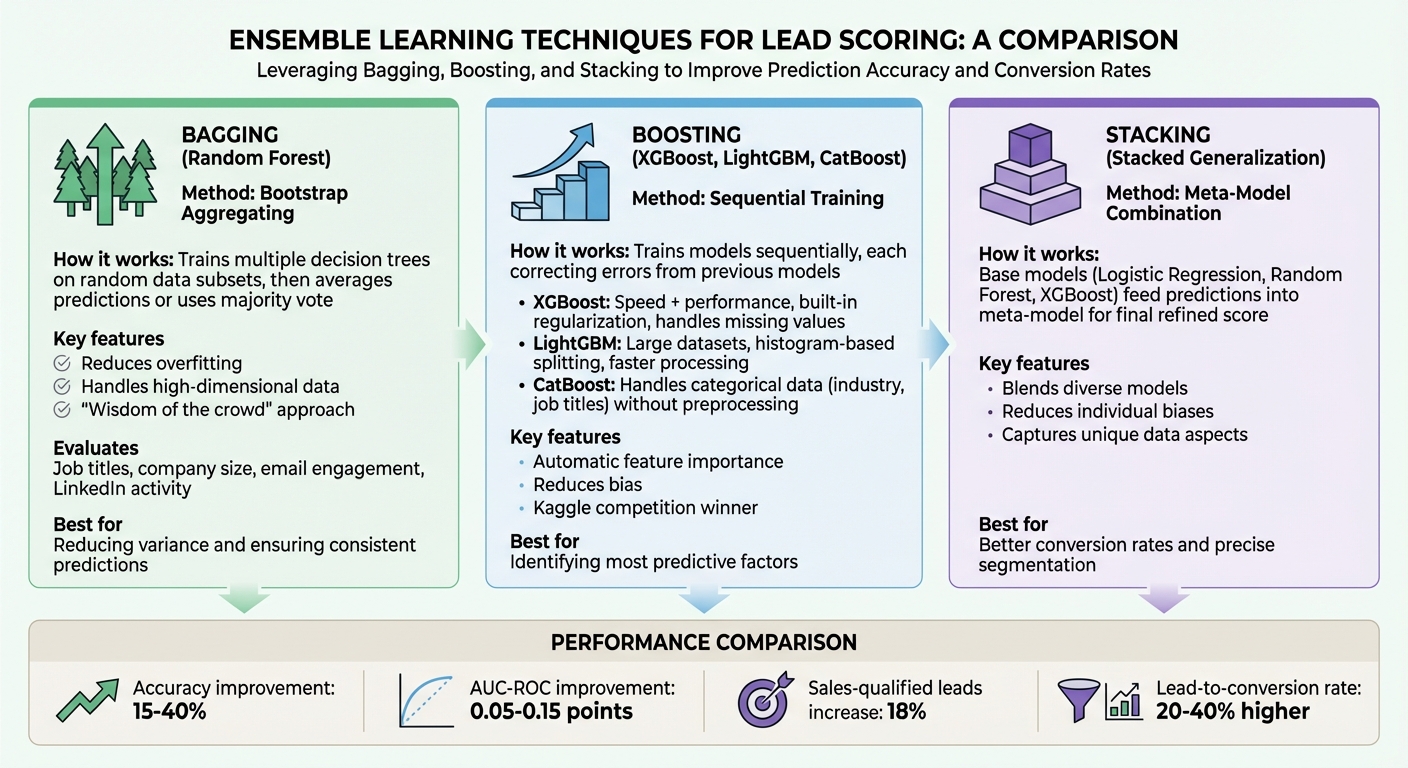

- Bagging (e.g., Random Forest): Reduces overfitting by averaging predictions from multiple models.

- Boosting (e.g., XGBoost, LightGBM, CatBoost): Sequentially improves accuracy by focusing on correcting prior errors.

- Stacking: Combines diverse models to refine predictions further.

These methods improve metrics like AUC-ROC and recall, ensuring fewer missed opportunities and better alignment between sales and marketing teams. Tools like SalesMind AI can automate this process, providing real-time lead scoring updates and actionable insights.

How Ensemble Learning Improves Lead Scoring Accuracy

Ensemble learning tackles the limitations of single-model approaches by combining multiple models, often referred to as "weak learners", into a unified system. This approach produces predictions that are more reliable and precise than what any individual model can achieve on its own [3]. As a result, it significantly enhances lead scoring accuracy.

Reducing Bias and Variance

Prediction errors generally stem from three factors: Bias², Variance, and Irreducible Error [3]. Ensemble methods systematically address the first two. For example, Bagging (Bootstrap Aggregating) trains multiple versions of the same model, each on a different bootstrap sample of the data (a random sample drawn with replacement). Averaging their predictions smooths out noise and curbs overfitting, a common issue in high-variance models like decision trees [2][3]. Random Forest, a widely used bagging technique, has been reported to cut misclassification errors by up to 30% compared to a single decision tree [3].
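The three error sources named above are usually written as the following decomposition of expected squared error. The symbols are notation introduced here for illustration, not taken from the cited sources: $f$ is the true relationship, $y = f(x) + \varepsilon$ is the observed outcome with noise variance $\sigma^2$, and $\hat{f}$ is a model trained on a random sample of the data.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big] = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2} + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{Variance}} + \underbrace{\sigma^2}_{\text{Irreducible Error}}
$$

For bagging, a useful rule of thumb is the variance of an average of $B$ identically distributed models, each with variance $s^2$ and pairwise correlation $\rho$:

$$
\operatorname{Var}\!\left(\frac{1}{B}\sum_{b=1}^{B}\hat{f}_b(x)\right) = \rho\, s^2 + \frac{1-\rho}{B}\, s^2
$$

The second term shrinks as more models are averaged, while the first depends on how correlated the models are. This is why training each model on a different sample helps: less correlated models leave less variance behind after averaging.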

Boosting, on the other hand, trains models sequentially, with each iteration focusing on correcting errors made by the previous models [2][3]. This step-by-step refinement helps the ensemble address "hard-to-predict" cases and reduces overall bias. Because boosting concentrates on the examples it gets wrong, it can also chase outliers or noisy labels in lead engagement metrics, so settings such as a small learning rate and early stopping are commonly used to keep that in check. Reported accuracy gains from boosting are in the 10–20% range [3], with the largest improvements typically coming in the early rounds as the model becomes increasingly adept at handling difficult cases.

Together, these methods not only reduce errors but also enable the system to better interpret intricate data patterns.

Processing Complex Datasets

Lead datasets often come with their own set of challenges. They may include irrelevant details, unusual outliers, and high-dimensional features such as job titles, company size, industry, engagement metrics, and LinkedIn activity. Bagging methods like Random Forest are particularly effective in handling these complexities. By reducing variance, they ensure more accurate predictions when analyzing high-dimensional data [2]. Additionally, the diversity within an ensemble allows it to capture various aspects of the data's underlying structure, making it better equipped to identify complex patterns that might confuse a single algorithm [2]. This makes ensemble learning a powerful tool for reliable lead qualification.

Measured Accuracy Improvements

These technical advantages translate into tangible improvements in lead scoring performance. Ensemble methods can enhance accuracy by 15–40% [2]. This improvement is reflected in metrics like the Area Under the ROC Curve (AUC-ROC), which assesses how well the model differentiates between high-value and low-value leads [2]. In cases where datasets are imbalanced - where qualified leads are significantly outnumbered by unqualified ones - ensembles improve recall (sensitivity), ensuring fewer valuable leads slip through the cracks [2]. By combining the variance reduction of bagging with the bias reduction of boosting, ensemble methods create a scoring system that's both precise and resilient, even when dealing with the messy, unpredictable nature of real-world lead data.
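To make the two metrics concrete, here is a minimal sketch that computes AUC-ROC, recall, and precision with scikit-learn. The labels and scores are made-up toy values (3 qualified leads out of 10), and the 0.5 cutoff is an assumption chosen for illustration.

```python
from sklearn.metrics import precision_score, recall_score, roc_auc_score

# Toy, hand-written data: 1 = qualified lead, 0 = unqualified lead.
y_true = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]
# Hypothetical model outputs (probability that the lead is qualified).
y_score = [0.9, 0.2, 0.4, 0.6, 0.1, 0.7, 0.3, 0.8, 0.2, 0.5]

# AUC-ROC uses the raw scores: it measures how often a qualified lead
# is ranked above an unqualified one, independent of any cutoff.
auc = roc_auc_score(y_true, y_score)

# Recall and precision need hard decisions, so a cutoff is applied first.
threshold = 0.5  # assumed cutoff, tune for your own pipeline
y_pred = [int(score >= threshold) for score in y_score]

recall = recall_score(y_true, y_pred)        # share of qualified leads caught
precision = precision_score(y_true, y_pred)  # share of flagged leads that are qualified

print(f"AUC-ROC={auc:.3f} recall={recall:.2f} precision={precision:.2f}")
```

On this toy data every qualified lead is caught (recall of 1.0), at the cost of two false positives among the five flagged leads. Lowering or raising the cutoff trades one metric against the other, while AUC-ROC stays the same.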

Main Ensemble Learning Techniques for Lead Scoring

Ensemble Learning Techniques for Lead Scoring: Bagging vs Boosting vs Stacking

Ensemble methods like bagging, boosting, and stacking are game-changers for lead scoring. By combining the strengths of multiple models, they improve prediction accuracy and reliability, addressing the limitations of traditional single-model approaches.

Bagging Methods (Random Forest)

Random Forest relies on bootstrap aggregating, or bagging, to train multiple decision trees on different bootstrap samples of your lead data [4]. Each tree independently evaluates features such as job titles, company size, email engagement, and LinkedIn activity. The final lead score is either the average of the trees' predictions or a majority vote across the trees, and you can sanity-check the result with a lead scoring calculator. This "wisdom of the crowd" approach helps reduce overfitting and ensures more consistent predictions [4].
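The bagging workflow described above can be sketched with scikit-learn's `RandomForestClassifier`. The data here is synthetic (generated with `make_classification`, with roughly 20% positives to mimic an imbalanced lead set), and scaling the predicted probability to a 0–100 score is an assumption made for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic stand-in for lead data: 600 leads, 10 numeric features,
# about 20% labeled as qualified (class 1).
X, y = make_classification(
    n_samples=600, n_features=10, n_informative=5,
    weights=[0.8, 0.2], random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Each of the 100 trees is fit on its own bootstrap sample of X_train.
forest = RandomForestClassifier(n_estimators=100, random_state=0)
forest.fit(X_train, y_train)

# predict_proba averages the per-tree class probabilities;
# multiplying by 100 gives a 0-100 lead score (an illustrative convention).
lead_scores = forest.predict_proba(X_test)[:, 1] * 100

print(lead_scores[:5].round(1))
```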

Boosting Methods (XGBoost, LightGBM, CatBoost)

Boosting works by training models sequentially, with each new model correcting the errors made by the previous ones[6][7]. In lead scoring, three boosting algorithms stand out:

- XGBoost: Known for balancing speed and performance, XGBoost includes built-in regularization to minimize overfitting and can handle missing values automatically.

- LightGBM: Designed for large datasets, LightGBM uses histogram-based splitting and leaf-wise tree growth, making it faster and more efficient when processing extensive lead data.

- CatBoost: Specializes in handling categorical data, like industry types or job titles, without requiring manual preprocessing.

These algorithms also compute feature importance automatically, helping you identify which factors - like customer behavior or demographic details - are most predictive of a qualified lead. Boosting techniques are widely recognized for their success, even in competitive settings like Kaggle[6].
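As a rough sketch of sequential boosting and feature importance, the example below uses scikit-learn's `GradientBoostingClassifier` as a stand-in so that it runs without extra dependencies. XGBoost, LightGBM, and CatBoost are separate libraries with their own options. The data is synthetic and the feature names are hypothetical labels attached purely for readability.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier

# Hypothetical feature names; the underlying data is synthetic.
feature_names = [
    "job_seniority", "company_size", "email_opens",
    "site_visits", "linkedin_activity", "industry_code",
]
X, y = make_classification(
    n_samples=500, n_features=6, n_informative=4, random_state=0
)

# Trees are added one at a time; each new tree is fit to the errors
# left by the trees before it. A small learning rate damps each step.
booster = GradientBoostingClassifier(
    n_estimators=100, learning_rate=0.1, max_depth=3, random_state=0
)
booster.fit(X, y)

# Impurity-based importances, normalized so they sum to 1.
ranked = sorted(
    zip(feature_names, booster.feature_importances_),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, importance in ranked:
    print(f"{name:20s} {importance:.3f}")
```

Importances computed this way describe what the model relied on during training, not causal drivers of conversion, so they are best read as a starting point for discussion with the sales team.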

Stacking for Better Predictions

Stacking, or stacked generalization, takes ensemble learning to the next level by combining predictions from multiple models. For instance, base models like Logistic Regression, Random Forest, and XGBoost are trained on your lead data. Their predictions are then fed into a meta-model, which learns how to best combine these outputs into a final, refined lead score[4].

The beauty of stacking lies in its ability to blend diverse models, each capturing unique aspects of the data. This reduces individual biases and often leads to better lead conversion rates and more precise segmentation. By integrating multiple perspectives, stacking ensures a well-rounded and effective scoring system.
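A minimal sketch of the stacking setup described above, using scikit-learn's `StackingClassifier`. Here `GradientBoostingClassifier` stands in for XGBoost to keep the example dependency-free, the data is synthetic, and the choice of logistic regression as the meta-model is an assumption rather than a recommendation from the cited sources.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import (
    GradientBoostingClassifier,
    RandomForestClassifier,
    StackingClassifier,
)
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(
    n_samples=500, n_features=10, n_informative=5, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Diverse base models: one linear, one bagged, one boosted.
base_models = [
    ("logreg", make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))),
    ("forest", RandomForestClassifier(n_estimators=50, random_state=0)),
    ("boost", GradientBoostingClassifier(random_state=0)),
]

# The meta-model is trained on out-of-fold predictions (cv=5), so it
# never sees base-model outputs for rows those models were fit on.
stack = StackingClassifier(
    estimators=base_models,
    final_estimator=LogisticRegression(),
    cv=5,
    stack_method="predict_proba",
)
stack.fit(X_train, y_train)

auc = roc_auc_score(y_test, stack.predict_proba(X_test)[:, 1])
print(f"Stacked AUC-ROC: {auc:.3f}")
```

Stacking is not guaranteed to beat its best base model, so it is worth comparing the stacked AUC against each base model's AUC on the same held-out leads.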

How to Implement Ensemble Learning for Lead Scoring

If you're ready to leverage ensemble learning to enhance lead scoring accuracy, here's a step-by-step guide to implement it effectively.

Preparing and Cleaning Your Data

Start by gathering data from multiple sources like your CRM, marketing tools, website analytics, email campaigns, and LinkedIn engagement logs [5]. This gives you a complete view of each lead’s interactions and behavior.

Focus on data quality over sheer volume. Eliminate duplicate entries, standardize formatting, and resolve inconsistencies across systems. For a solid foundation, you’ll need a minimum of 40 qualified leads and 40 disqualified leads from a timeframe that aligns with your sales cycle - this could range from three months to two years [8]. Then use feature engineering, alongside AI prompts for lead generation, to turn raw data into signals a model can learn from. For instance, instead of just tracking "time spent on the website", calculate a "lead engagement score" to make the data more meaningful for your model [5].
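The cleaning and feature-engineering steps above might look like this in pandas. The column names, the sample rows, and the equal weighting of the three signals are all assumptions made for illustration; the article does not define how a "lead engagement score" should be calculated.

```python
import pandas as pd

# Hypothetical raw export: the first two rows are the same lead with
# inconsistent formatting (case and trailing whitespace).
raw = pd.DataFrame({
    "email": ["Ann@Example.com ", "ann@example.com", "bob@example.com", "cy@example.com"],
    "minutes_on_site": [12.0, 12.0, 3.0, 30.0],
    "emails_opened": [4, 4, 0, 10],
    "linkedin_replies": [1, 1, 0, 2],
})

# Standardize formatting first, then drop duplicates on the cleaned key.
leads = (
    raw.assign(email=raw["email"].str.strip().str.lower())
       .drop_duplicates(subset="email")
       .reset_index(drop=True)
)

# Scale each signal to 0-1 by its maximum, then average the signals
# with equal weights (an assumption) into a single 0-100 feature.
signals = ["minutes_on_site", "emails_opened", "linkedin_replies"]
normalized = leads[signals] / leads[signals].max()
leads["lead_engagement_score"] = (normalized.mean(axis=1) * 100).round(1)

print(leads[["email", "lead_engagement_score"]])
```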

Once your data is clean and enriched, you're ready to move on to model selection and training.

Selecting and Training Your Models

Platforms like Amazon SageMaker Autopilot streamline the process of model selection. These tools automatically test multiple machine learning algorithms and ensemble combinations to find the best fit for your dataset [9]. This automation can save you weeks of manual trial and error.

Dennis Liang from AWS Builder Center highlighted, "Amazon SageMaker AutoPilot allowed us to rapidly experiment with multiple machine learning models and ensembles over unclean data" [9].

When training your model, split your data chronologically. Train it using historical leads (e.g., leads created before a specific date) and test it on newer leads to simulate future scenarios [9]. Use AUC (Area Under the Curve) as your main performance metric. AUC helps balance identifying qualified leads while keeping false positives in check [8][9].
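A chronological split can be sketched in a few lines of pandas. The helper name `chronological_split`, the column names, and the cutoff date are hypothetical and chosen only for this example.

```python
import pandas as pd

def chronological_split(leads: pd.DataFrame, date_column: str, cutoff: str):
    """Split leads into (train, test) by creation date.

    Leads created before the cutoff go to training; leads created on or
    after it are held out, so the test set always lies in the "future".
    """
    cutoff_ts = pd.Timestamp(cutoff)
    train = leads[leads[date_column] < cutoff_ts]
    test = leads[leads[date_column] >= cutoff_ts]
    return train, test

# Hypothetical lead history.
leads = pd.DataFrame({
    "created_at": pd.to_datetime(
        ["2023-01-10", "2023-03-05", "2023-06-20", "2023-09-01", "2023-11-15"]
    ),
    "converted": [0, 1, 0, 1, 0],
})

train, test = chronological_split(leads, "created_at", "2023-07-01")
print(len(train), "training leads,", len(test), "test leads")
```

Unlike a random split, this keeps information from later leads out of training, which gives a more honest estimate of how the model will perform on leads it has not seen yet.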

After training, focus on rigorous testing and set the stage for deployment.

Testing and Deploying Your Model

To understand your model's predictions, use SHAP (SHapley Additive exPlanations) values. These values pinpoint which features influence a lead's score, making the results more transparent for your sales team [9]. Define scoring thresholds to guide actions: for example, leads scoring above 85 should receive immediate outreach, while those scoring between 50 and 70 can enter nurturing campaigns [5].
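The example thresholds above translate into a small routing function. The article only names two bands, so the `"manual_review"` fallback for every other score (below 50, or between 70 and 85) is an assumption added here so the function always returns something. The function name and action labels are likewise hypothetical.

```python
def route_lead(score: float) -> str:
    """Map a 0-100 lead score to a follow-up action."""
    if score > 85:
        return "immediate_outreach"
    if 50 <= score <= 70:
        return "nurture"
    # Assumption: scores outside the two bands named in the article
    # are sent to a human for review rather than dropped.
    return "manual_review"

for score in (92, 60, 78, 20):
    print(score, "->", route_lead(score))
```

Making the fallback explicit is a deliberate choice: it surfaces the uncovered ranges so the team can decide what should happen to those leads.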

Deploy the model via an inference endpoint that integrates directly with your CRM. Tools like SalesMind AI allow sales teams to view real-time scores and see the factors influencing each lead’s ranking. To keep your model relevant, set up automatic retraining every 15 days or whenever you adjust engagement tracking configurations. Keeping the model updated ensures it adapts to evolving buyer behaviors [5][8]. For example, in 2024, Linda Johnson at Workforce Software reported a 121% boost in in-market account engagement over six months by implementing AI-driven scoring with regular updates [5].
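The retraining rule described above (every 15 days, or whenever engagement tracking changes) can be expressed as a simple check that a scheduler calls once a day. This is a sketch using only the standard library; the function and parameter names are hypothetical and do not correspond to any specific platform's API.

```python
from datetime import date, timedelta

RETRAIN_INTERVAL = timedelta(days=15)  # refresh cycle cited in the article

def retraining_due(
    last_trained: date,
    today: date,
    tracking_config_changed: bool = False,
) -> bool:
    """Return True when the model should be retrained.

    Retraining is triggered either by age (15 or more days since the
    last run) or by a change to the engagement tracking configuration.
    """
    return tracking_config_changed or (today - last_trained) >= RETRAIN_INTERVAL

print(retraining_due(date(2024, 1, 1), date(2024, 1, 10)))  # 9 days old
print(retraining_due(date(2024, 1, 1), date(2024, 1, 16)))  # 15 days old
```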

| Implementation Phase | Key Activities | Primary Tools/Metrics |
|---|---|---|
| Data Preparation | Cleaning, feature engineering, ETL workflows | CRM, LinkedIn, AWS Glue [5][9] |
| Model Training | Historical data splitting, weighting factors | SageMaker Autopilot, AutoML [9] |
| Evaluation | Performance testing, interpretability | AUC score, SHAP values [8][9] |
| Deployment | Real-time inference, CRM integration | SalesMind AI, SageMaker Endpoints [8][9] |
| Optimization | Threshold setting, auto-retraining | 15-day refresh cycle [5][8] |

Using SalesMind AI to Optimize Ensemble Models

Once your ensemble model is up and running, the next hurdle is keeping it accurate as buyer behavior evolves. SalesMind AI steps in with tools designed for ongoing refinement and precision tuning, helping you maintain top-notch lead scoring without needing constant manual tweaks. With its dynamic capabilities, SalesMind AI adjusts and fine-tunes your model in real time.

Continuous Model Improvement and Real-Time Updates

SalesMind AI takes the guesswork out of lead scoring by assigning an Engagement Score from 0 to 100 based on real-time lead tracking. This eliminates the need for manual analysis [11]. The score updates automatically as leads interact with your outreach efforts, ensuring your ensemble model always operates with the most current data. Additionally, the platform syncs lead details - like job descriptions and interaction history - directly to tools like Google Sheets for easy monitoring [10][12].

Transparency is a key feature here. The system provides a detailed breakdown of lead scores in the campaign's "Activities" tab. This allows you to see which factors your ensemble model is prioritizing and make adjustments to your persona criteria if needed [10][12]. For instance, if you notice the model disproportionately penalizing leads with slightly less experience than your target, you can tweak the filters to include these near-threshold prospects. Automated workflow triggers, such as the Conversation Reply feature, can also be set to activate immediately when a lead’s status changes. This ensures that high-scoring leads receive timely follow-ups while their interest is still strong [10][11].

With these tools in place, SalesMind AI also helps you manage the trade-off between precision and recall.

Balancing Precision and Recall

Ensemble methods are great for minimizing bias and variance, and SalesMind AI builds on this by offering ways to fine-tune lead scoring even further. You can set specific exclusion criteria within personas to automatically disqualify leads that don’t align with your ideal profile [11]. Leads that meet these exclusion rules are assigned a score of zero, cutting down on false positives [12].

At the same time, the platform employs intelligent score reduction logic. Instead of outright disqualifying near-threshold leads, it lowers their maximum score. This approach keeps these prospects in your pipeline but at a lower priority, improving recall without overwhelming your sales team with unsuitable leads [10]. To make prioritization easier, visual "temperature" tags indicate engagement levels, adding a qualitative layer to the numerical scores [11]. Regularly reviewing the scoring rationale in the Activities tab allows you to spot overly restrictive tendencies in your model and make adjustments to maintain a better balance [10][12].
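To illustrate the difference between hard exclusion and score reduction, here is a purely hypothetical sketch of that kind of logic. It is not SalesMind AI's implementation: the function name, the flags, and the cap of 60 are all assumptions invented for this example.

```python
def adjust_score(
    raw_score: float,
    matches_exclusion: bool,
    near_threshold: bool,
    capped_max: float = 60.0,  # assumed cap, not a documented value
) -> float:
    """Apply exclusion and score-reduction rules to a 0-100 lead score."""
    if matches_exclusion:
        # Hard rule: excluded leads score zero, which removes them
        # from outreach and cuts false positives.
        return 0.0
    if near_threshold:
        # Soft rule: the lead stays in the pipeline, but its score
        # cannot exceed the cap, so it ranks below full-fit leads.
        return min(raw_score, capped_max)
    return raw_score

print(adjust_score(88.0, matches_exclusion=True, near_threshold=False))
print(adjust_score(88.0, matches_exclusion=False, near_threshold=True))
print(adjust_score(88.0, matches_exclusion=False, near_threshold=False))
```

The hard rule protects precision, while the soft cap protects recall by keeping borderline prospects visible at a lower priority.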

Conclusion

Ensemble learning brings together multiple models to tackle bias and variance, resulting in more reliable lead scoring. Industry benchmarks reveal that these methods can boost AUC-ROC scores by 0.05–0.15 points compared to baseline models. Additionally, studies highlight an 18% rise in sales-qualified leads and accuracy improvements ranging from 15–25% in sales datasets[1][13][14][15].

To put ensemble methods into action, you'll need clean, well-prepared data, a variety of trained models, and a deployment strategy tailored for managing complex B2B sales pipelines. These methods are particularly effective at achieving high F1-scores, even when working with imbalanced datasets[1][15].

SalesMind AI simplifies this process by automating the deployment of ensemble models and refreshing lead scores every 24 hours with fresh LinkedIn interaction data. By analyzing signals like profile views and messaging responses, SalesMind AI achieves a 92% precision rate in lead scoring. This translates to a 25% improvement in sales alignment. Teams leveraging ensemble learning with SalesMind AI report 20–40% higher lead-to-conversion rates, turning raw prospects into revenue with precision and scalability.

For a quick start, focus on pre-built ensemble pipelines and targeted feature engineering, such as integrating LinkedIn engagement scores. This approach enables you to gain a competitive edge with minimal setup[14][16]. Combine these tools with SalesMind AI's automation to efficiently transform prospects into tangible revenue.

FAQs

How do I choose between bagging, boosting, and stacking for lead scoring?

Choosing between bagging, boosting, and stacking comes down to the specifics of your lead scoring requirements and the nature of your data.

- Bagging works well if you're dealing with high-variance models and want to reduce variance while avoiding overfitting.

- Boosting is ideal when accuracy is your top priority, especially with complex datasets.

- Stacking shines when you need to combine different models to achieve improved overall performance.

Your decision should reflect the complexity of your data, the resources available, and your accuracy objectives.

What’s the minimum amount of labeled lead data I need to start?

The implementation guide above cites a floor of 40 qualified and 40 disqualified leads [8]; treat that as the bare minimum for a model to train at all. For more dependable results, experts often suggest beginning with 300 to 500 labeled leads. This range provides enough data to generate reliable predictions and deliver useful insights.

How can I explain a lead score to my sales team?

A lead score is a number that reflects how likely a lead is to become a customer. This score is determined through machine learning, which evaluates various factors like demographics, behaviors, and firmographics.

Here's how it works: higher scores (like 80–100) indicate that a lead has a strong chance of converting, while lower scores suggest they’re less likely to make the jump. By keeping these scores updated, your team can zero in on the most promising leads, saving time and improving overall efficiency.